Continuously improve data quality, workflow reliability, and scalability by exploring and implementing pioneering technologies and solutions. Design and implement reusable systems for extracting, transforming, and loading data from diverse sources into centralized data lakes and reporting warehouses. Develop and maintain scalable data pipelines to ensure data integrity, monitoring, and lineage, handling large-scale daily event processing in our data lake and data warehouse. In addition, the system's functional requirements drive us towards the use of rule engines and machine learning. You are someone who is passionate about system scalability, performance, and processing big volumes of data. Unity Ads enables developers to build a business through advertising and in-app purchases.

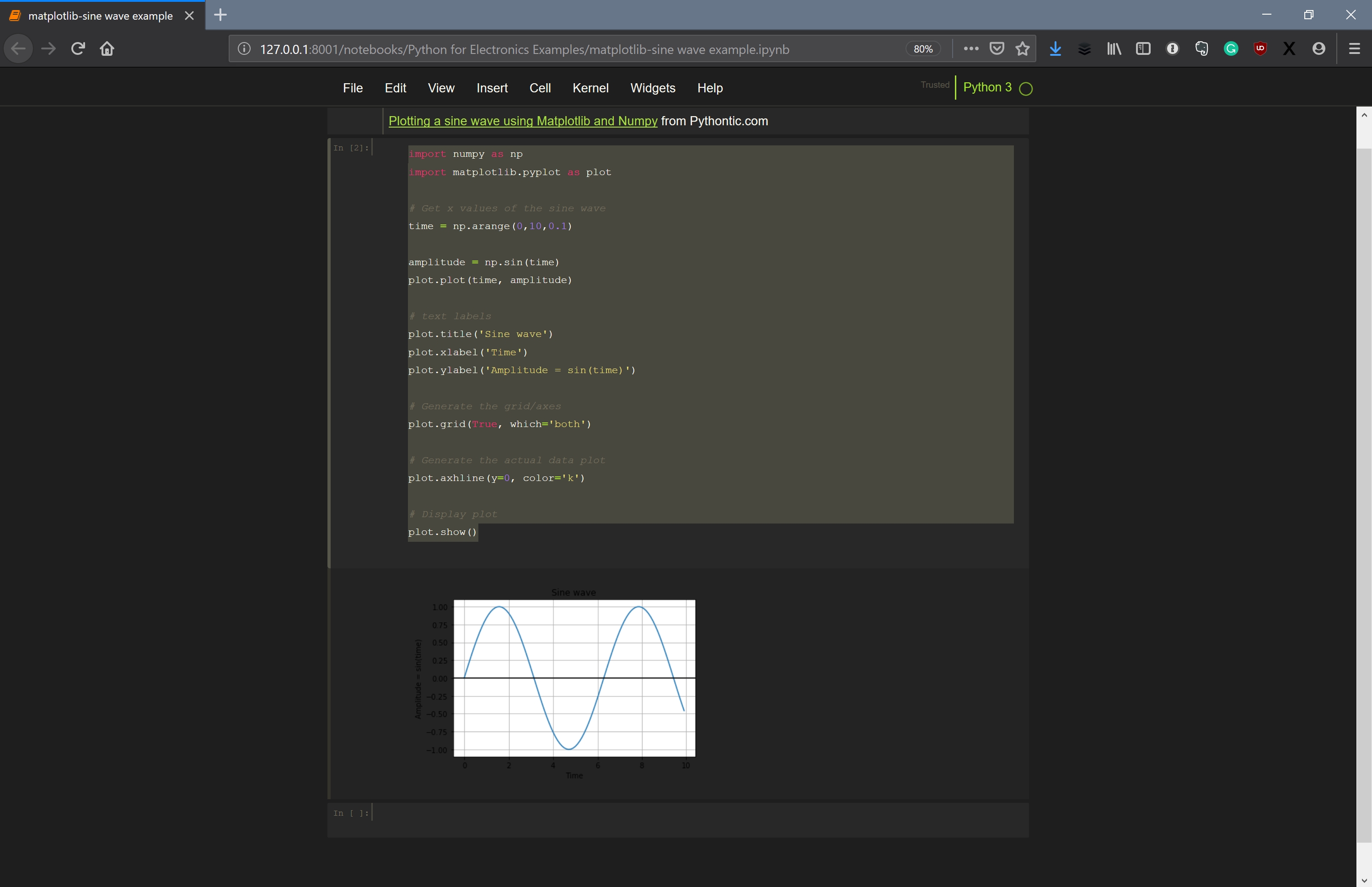

We are seeking a Data Engineer to join a team focused on building the next generation of Unity's Ads suite. The goal is to make our customers more successful and their consumers happier by combining data from over a billion players with sophisticated deep learning technology and in-house proprietary analytics. We are hard at work integrating progressive monetization systems deep into the core of Unity's engine. We put the most creative tools in the hands of millions of developers and artists through our foundational principle of Democratization of Development. Unity powers over half of all the world's games and over two thirds of VR and AR products.

Two Airflow example DAGs are referenced here. The first is declared with a start date of datetime(2022, 1, 1), opens with an EmptyOperator whose task_id is "start", and then runs a SubDagOperator whose task_id is "section-1". The second, example_dag_decorator, is a DAG to send the server IP to an email. It is declared with schedule=None, start_date=pendulum.datetime(2021, 1, 1, tz="UTC"), and catchup=False. A GetRequestOperator task named get_ip fetches the response, and a prepare_email task, declared with multiple_outputs=True, takes the raw JSON as a dict and reads the external IP from it.

For more information on schedule values, see DAG Run. If schedule is not enough to express the DAG's schedule, see Timetables. For more information on logical date, see Data Interval.

Every time you run a DAG, you are creating a new instance of that DAG, which Airflow calls a DAG Run. DAG Runs can run in parallel for the same DAG, and each has a defined data interval, which identifies the period of data the tasks should operate on.

As an example of why this is useful, consider writing a DAG that processes a daily set of experimental data. It's been rewritten, and you want to run it on the previous 3 months of data. That is no problem, since Airflow can backfill the DAG and run copies of it for every day in those previous 3 months, all at once.

Those DAG Runs will all have been started on the same actual day, but each DAG Run will have one data interval covering a single day in that 3 month period, and that data interval is all that the tasks, operators, and sensors inside the DAG look at when they run.

In much the same way that a DAG instantiates into a DAG Run every time it's run, the tasks specified inside a DAG are also instantiated into Task Instances along with it.

A DAG Run will have a start date when it starts and an end date when it ends. This period describes the time when the DAG actually "ran". Aside from the DAG Run's start and end date, there is another date called the logical date (formerly known as the execution date), which describes the intended time a DAG Run is scheduled or triggered. It is called logical because it is abstract and has multiple meanings, depending on the context of the DAG Run itself.

For example, if a DAG Run is manually triggered by the user, its logical date is the date and time at which the DAG Run was triggered, and the value should be equal to the DAG Run's start date. However, when the DAG is being automatically scheduled, with a certain schedule interval put in place, the logical date indicates the time that marks the start of the data interval, and the DAG Run's start date is then the logical date plus the scheduled interval.
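The backfill example and the logical date rules described above can be sketched in plain Python. This is only an illustration under stated assumptions, not Airflow's own implementation: the function names (`daily_backfill_runs`, `earliest_scheduled_start`, `manual_run_logical_date`), the dictionary keys, and the three-month window are all hypothetical, and the sketch uses only the standard library rather than any Airflow API.

```python
from datetime import datetime, timedelta, timezone

ONE_DAY = timedelta(days=1)


def daily_backfill_runs(first_day, last_day):
    """Build one run record per day in [first_day, last_day] (hypothetical helper).

    Each record covers a single-day data interval. For a scheduled run,
    the logical date marks the start of that interval.
    """
    runs = []
    logical_date = first_day
    while logical_date <= last_day:
        runs.append(
            {
                "logical_date": logical_date,
                "data_interval_start": logical_date,
                "data_interval_end": logical_date + ONE_DAY,
            }
        )
        logical_date += ONE_DAY
    return runs


def earliest_scheduled_start(logical_date, interval=ONE_DAY):
    """Scheduled run: start date is the logical date plus the schedule interval."""
    return logical_date + interval


def manual_run_logical_date(triggered_at):
    """Manually triggered run: the logical date equals the trigger time."""
    return triggered_at


# Assumed three-month window for illustration (January through March 2022).
first_day = datetime(2022, 1, 1, tzinfo=timezone.utc)
last_day = datetime(2022, 3, 31, tzinfo=timezone.utc)
backfill = daily_backfill_runs(first_day, last_day)

# All of these runs could be started on the same actual day, yet each one
# only looks at the data inside its own single-day interval.
for run in backfill[:3]:
    print(run["logical_date"].date(), "->", run["data_interval_end"].date())
```

The point of the sketch is that the wall-clock time at which a run starts is independent of the interval of data it processes, which is what lets a backfill launch many runs at once without them overlapping.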
